By: Janice Lopez

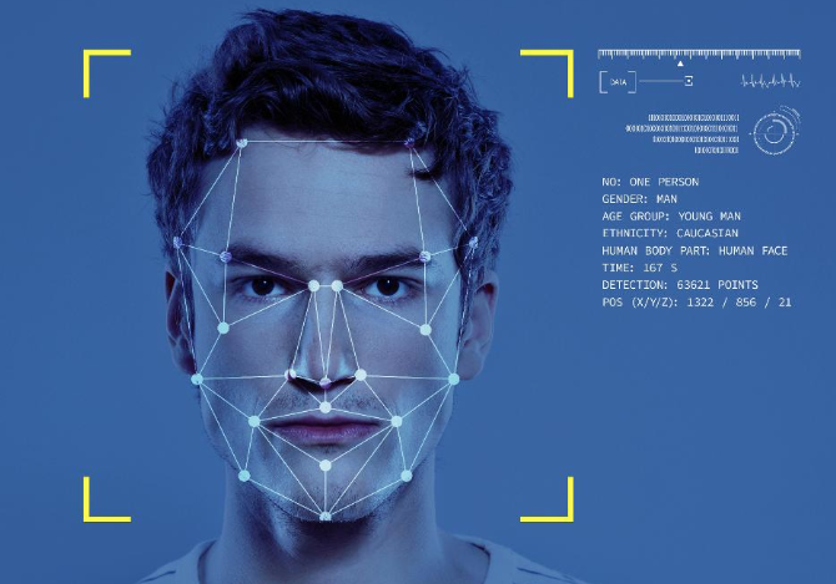

On January 22, 2020, a federal class action was filed against Clearview AI in the Northern District of Illinois for gathering biometric information on millions of American citizens.[1] Clearview markets itself as a research tool that helps law enforcement officials find criminals and identify victims.[2] Clearview’s technology allows a user to upload a photo of a person, after which an algorithm identifies that person by matching the photo against other publicly available images of the same individual.[3] Clearview has collected over 3 billion images from millions of websites, such as Facebook and Twitter, and used them to compile a database of individuals’ facial attributes.[4] Biometric facial recognition measures certain facial characteristics and then compares those measurements against a database to determine who someone is.[5] Clearview has been working with law enforcement agencies by granting them access to its database for a fee.[6] Law enforcement has used Clearview to solve cases involving petty theft, fraud, murder, and child sexual exploitation.[7]

Plaintiff David Mutnick brought suit on behalf of all American citizens, alleging violations of the First Amendment, the Fourth Amendment, the Fourteenth Amendment, and the Contracts Clause of Article I.[8] Facial recognition software threatens to have the same kind of “chilling effect” on free speech that concerned the Supreme Court in Gibson v. Florida Legislative Investigation Committee, where the Court held that the government may not compel the production of an organization’s membership list for investigative purposes.[9] Research has shown that people change their behavior when they believe the government is watching them, and this phenomenon disproportionately affects people of color and other marginalized groups.[10] From a policy perspective, other major concerns with facial recognition software are the possibility of erroneous identification and the lack of explicit consent for someone’s face to be held in Clearview’s database.[11] Mutnick comes only months after Patel v. Facebook, in which the Ninth Circuit became the first appellate court in the United States to hold that individuals may have standing to sue when websites use facial recognition software to monitor them.[12] The court found that people have privacy rights in the biometric information that companies like Facebook collect to identify individuals.[13] The court also recognized the potential harms that could arise as facial recognition technology continues to advance.[14]

The plaintiff also brought suit specifically under the Illinois Biometric Information Privacy Act (BIPA).[15] BIPA is one of the most important privacy laws in the country because there is no federal law limiting the collection of biometric data.[16] Under BIPA, private entities may not collect biometric information without consent, sell such information to third parties, or otherwise disclose it.[17] Private entities that possess biometric information must develop a publicly available policy that includes a retention schedule and “guidelines for permanently destroying biometric identifiers and biometric information.”[18] Furthermore, a private entity cannot collect a person’s biometric data unless it receives written consent from that person.[19] Patel has major implications for Mutnick. Because Mutnick was brought in federal court, the plaintiff must show standing under Article III of the Constitution, which requires an injury-in-fact. BIPA, however, is a state law, and it provides that a person has standing whenever a company violates its requirements.[20] Thus, an injury under BIPA is not necessarily an injury under Article III. Patel clarified that a violation of BIPA can confer standing for purposes of Article III because privacy violations may cause concrete injury.[21] As a result, a plaintiff alleging a BIPA violation may have standing in federal court, and not only in state court.

In light of Mutnick, other stakeholders are increasingly concerned about the potential privacy violations arising from Clearview’s image collection efforts and from law enforcement’s use of Clearview’s services.[22] Recently, Twitter sent Clearview a cease-and-desist letter on the grounds that including images from Twitter in Clearview’s database violates Twitter’s terms of service.[23] The attorney general of New Jersey sent a similar letter to Clearview and has called on New Jersey police to stop using Clearview’s facial recognition software.[24] Currently, California, New Hampshire, and Oregon ban police officers from using facial recognition software with body cameras.[25]

A number of major companies, such as Facebook and Google, sell biometric data for profit, and that data is used to create targeted advertisements based on individual profiles.[26] Similarly, Clearview uses individuals’ private biometric information for profit by selling it to law enforcement agencies.[27] Currently, no federal law protects people from corporations that collect and use personal information for profit. As a result, except in a small minority of states, there are no meaningful limits on when private biometric data can be bought and sold without a person’s knowledge or consent.

Twitter: @findingjanice

Facebook: Janice Lopez

[1] Complaint at 1, Mutnick v. Clearview AI, Inc. (N.D. Ill. 2020) (No. 1:20 Civ. 00512).

[2] Kashmir Hill, The Secretive Company That Might End Privacy as We Know It, New York Times (Jan. 18, 2020), https://www.nytimes.com/2020/01/18/technology/clearview-privacy-facial-recognition.html.

[3] Id.

[4] Id.

[5] Bruce Schneier, We’re Banning Facial Recognition. We’re Missing the Point., New York Times (Jan. 20, 2020), https://www.nytimes.com/2020/01/20/opinion/facial-recognition-ban-privacy.html?searchResultPosition=1.

[6] Complaint, supra note 1, at 2.

[7] Kashmir Hill, The Secretive Company That Might End Privacy as We Know It, New York Times (Jan. 18, 2020), https://www.nytimes.com/2020/01/18/technology/clearview-privacy-facial-recognition.html.

[8] Complaint, supra note 1, at 3.

[9] Gibson v. Fla. Legislative Investigation Comm., 372 U.S. 539, 557 (1963).

[10] Jennifer Lynch, Clearview AI—Yet Another Example of Why We Need A Ban on Law Enforcement Use of Face Recognition Now, EFF (Jan. 31, 2020), https://www.eff.org/deeplinks/2020/01/clearview-ai-yet-another-example-why-we-need-ban-law-enforcement-use-face.

[11] Kate O’Flaherty, Clearview AI’s Database Has Amassed 3 Billion Photos. This Is How If You Want Yours Deleted, You Have To Opt Out, Forbes (Jan. 26, 2020), https://www.forbes.com/sites/kateoflahertyuk/2020/01/26/clearview-ais-database-has-amassed-3-billion-photos-this-is-how-if-you-want-yours-deleted-you-have-to-opt-out/#72b2583d60aa.

[12] Blake A. Klinkner, Facial Recognition Technology, Biometric Identifiers, and Standing to Litigate Invasions of Digital Privacy, Wyo. Law., Oct. 2019, at 44.

[13] Patel v. Facebook, Inc., 932 F.3d 1264, 1273 (9th Cir. 2019).

[14] Id. at 1272.

[15] Complaint, supra note 1, at 4.

[16] Susan Crawford, Facial Recognition Laws Are (Literally) All Over the Map, Wired (Dec. 16, 2019), https://www.wired.com/story/facial-recognition-laws-are-literally-all-over-the-map/.

[17] 740 Ill. Comp. Stat. Ann. 14/15 (West 2020).

[18] Id.

[19] Id.

[20] Id.

[21] Patel, 932 F.3d at 1267.

[22] Kashmir Hill, New Jersey Bars Police From Using Clearview Facial Recognition App, New York Times (Jan. 24, 2020), https://www.nytimes.com/2020/01/24/technology/clearview-ai-new-jersey.html.

[23] Id.

[24] Id.

[25] Max Read, Why We Should Ban Facial Recognition Technology, New York Magazine (Jan. 30, 2020), https://nymag.com/intelligencer/2020/01/why-we-should-ban-facial-recognition-technology.html.

[26] Bruce Schneier, We’re Banning Facial Recognition. We’re Missing the Point., New York Times (Jan. 20, 2020), https://www.nytimes.com/2020/01/20/opinion/facial-recognition-ban-privacy.html?searchResultPosition=1.

[27] Kashmir Hill, New Jersey Bars Police From Using Clearview Facial Recognition App, New York Times (Jan. 24, 2020), https://www.nytimes.com/2020/01/24/technology/clearview-ai-new-jersey.html.